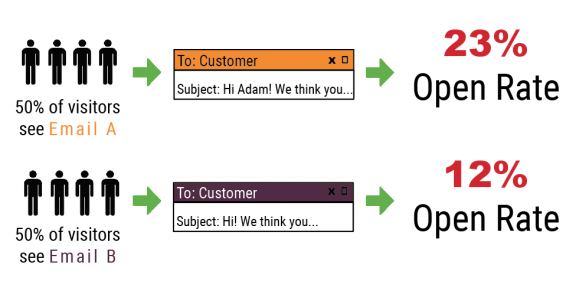

A/B testing, also known as split testing, is the practice of comparing two versions of a digital marketing asset that differ in one variable. Assets for split testing could be websites, landing pages, emails, or even ad sets (think: Facebook advertising). Why run an A/B test? To see which version performs best and what made the difference. The two versions of your asset, Version A and Version B, are shown to similar audiences and contain the same general information but with slight variations. Variations could be different images, text, or calls to action. Once both versions are live, you measure the success of each. Find out which converts more visitors (landing pages), earns a higher open rate (emails), or generally shows better engagement metrics. Simple enough, right? Read on to find out how to use split testing.

Why Should You A/B Test

It is important to use A/B testing so you know what advertising or marketing works and what needs to change. You might have an image on one landing page that attracts much more traffic to your website than the image on the other version. Similarly, one subject line might earn more “opens” than another subject line on the same email. For new digital marketing efforts, A/B testing can improve your overall ROI by testing multiple assets and optimizing for the most conversions.

How To Use Split Testing

Just because it’s called A/B testing doesn’t mean that you can only test two variants. There are a number of elements you can test, but best practices (see below) include limiting the amount of variation between Version A and Version B. A slight difference will guide you to the highest-performing variable (call-to-action button colors, email subject lines, etc.). For example, if you have an email list of 3,000 subscribers and want to see which subject line gets the most opens, you can set up an A/B test. You can split test a variety of subject lines by sending each one to a different set of subscribers to see how your target audience reacts. Not everyone reacts the same way to copy, so A/B testing will help you resonate with your potential customers.

Let’s look at how you’d run an A/B test on a live website page. If you are testing two versions of a landing page, Version A shows a submit button that reads “CLICK HERE TO GET YOUR COPY” and Version B shows a button that reads “DOWNLOAD NOW TO GET THE EXCLUSIVE”. You can track which submit button worked best with your audience and use that data to create your final landing page.
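To make the 3,000-subscriber email example concrete, here is a minimal Python sketch of how a list could be split into random, equal-sized groups, one per subject line. The function names (`split_into_groups`, `open_rate`), the placeholder addresses, and the fixed seed are assumptions for illustration only; some email platforms handle this step for you.

```python
import random


def split_into_groups(subscribers, n_groups, seed=None):
    """Randomly split subscribers into n_groups groups of near-equal size."""
    if n_groups < 2:
        raise ValueError("an A/B test needs at least two groups")
    shuffled = list(subscribers)
    # Shuffle a copy so the assignment is random and the input is untouched.
    random.Random(seed).shuffle(shuffled)
    # Deal subscribers out round-robin: group sizes differ by at most one.
    return [shuffled[i::n_groups] for i in range(n_groups)]


def open_rate(opens, delivered):
    """Share of delivered emails that were opened."""
    return opens / delivered if delivered else 0.0


# Hypothetical list of 3,000 subscriber addresses.
subscribers = [f"user{i}@example.com" for i in range(3000)]

# Two subject lines, so two groups: Version A and Version B.
group_a, group_b = split_into_groups(subscribers, 2, seed=42)
print(len(group_a), len(group_b))
```

Passing a larger `n_groups` covers the case where you want to try more than two subject lines. Random assignment matters here: if you split the list alphabetically or by signup date, the groups may differ in ways that have nothing to do with your subject line.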

Top 3 Things You Should A/B Test

While A/B testing or split testing is a great tool, it is stronger in some areas of digital marketing than in others. The first key is to know what you’re testing and how to measure your conversion results from an A/B test. Some platforms for email campaign monitoring or landing page building will automate A/B testing for your project. A small segment of your audience will see Version A and another segment will see Version B.

- Email campaigns

- Landing pages

- Graphic advertisements

Other Assets You Can A/B Test

- Call-to-action buttons

- Call-to-action text

- Images

- Links

- Headlines

- Sub headlines

- Infographics

- Subject lines

- Sending time and date

- Length of emails

- Length of paragraphs

- Color of backgrounds, logos, etc.

A/B Testing Best Practices

There are a few guidelines that are helpful when A/B testing. As we mentioned before, you want to limit the variables you change between Version A and Version B. If you change multiple elements at once, there is no definitive way to tell which single element increased conversions. Some platforms will encourage a Version C and Version D option as well. Before you take a chance on testing more versions or on multivariate testing, get comfortable with the process of A/B split testing first.

Let’s talk about the control group. Though this isn’t a “Bill Nye” science, it is a data science. You need to think like a scientist by defining your goals, your methods, and the measurements to track, then recording the results. Testing on a control group (a sample of your audience) will help you test on a smaller scale and then apply the winning version (Version A or Version B, based on the success of your test) to the rest of your audience. As with any control group, there should be an equal number of participants in test A as there are in test B.

In our example, we’re going to run an A/B test for email campaigns. If your list has 10,000 subscribers, you should A/B test on 20% of the audience. This leaves a control group for the split testing of 2,000 subscribers in total. Send Version A to 1,000 subscribers in the control group and, at the same time, send Version B to the remaining 1,000 subscribers in your control group. Because you’re a great marketing data scientist, you’ll already know what metrics to watch during the test. If you’re running an A/B test on subject lines, watch for the version with the highest open rate over 4 hours. If you’re running an A/B test on a call-to-action button inside the email, watch for the version with the highest click rate over 4 hours. Determine your most successful version based on the email campaign results and send that winning email to the remaining 8,000 subscribers. Remember, it’s important to run your A/B testing session for enough time to collect valuable data. It is critical to collect enough data to get accurate results, and ending too early can greatly reduce the accuracy of your test.
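The numbers in this example can be sketched in a few lines of Python. The first function carves a 20% sample out of a 10,000-subscriber list and splits it into two equal groups; the second is one common way to check whether a gap in open rates is bigger than chance would explain. This article does not prescribe a statistical test, so the two-proportion z-test below is our assumption, as are the function names (`plan_test`, `two_proportion_z_test`), the placeholder addresses, and the open counts.

```python
import math
import random


def plan_test(subscribers, test_fraction=0.2, seed=None):
    """Return (group_a, group_b, remainder) for a sample-based A/B test."""
    shuffled = list(subscribers)
    random.Random(seed).shuffle(shuffled)
    # Size of the whole test sample, then half of it for each version.
    sample_size = round(len(shuffled) * test_fraction)
    half = sample_size // 2
    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]  # gets the winning version later
    return group_a, group_b, remainder


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for a difference in rates; returns (z, p_value)."""
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical list of 10,000 subscriber addresses.
subscribers = [f"user{i}@example.com" for i in range(10_000)]
group_a, group_b, remainder = plan_test(subscribers, test_fraction=0.2, seed=7)

# Hypothetical results after the test window: opens per 1,000 sends.
opens_a, opens_b = 200, 250
z, p_value = two_proportion_z_test(opens_a, len(group_a), opens_b, len(group_b))
leader = "B" if z > 0 else "A"
print(f"sizes: {len(group_a)}, {len(group_b)}, {len(remainder)}")
print(f"z = {z:.2f}, p = {p_value:.4f}, leading version: {leader}")
```

A small p-value (0.05 is a common but arbitrary cutoff) suggests the difference in open rates is unlikely to be chance alone. A large one is a hint that you have not collected enough data yet, which is exactly the risk of ending a test too early.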

Digital marketing has a lot of hidden and useful tools, like A/B testing. The time it takes to create these tests and measure their success can be considerable. We understand that you’ve got a furniture store to run. That’s why our growing team of award-winning digital marketing experts is ready to help. See how we can improve your digital marketing through A/B testing, email campaigns, and other digital marketing solutions!